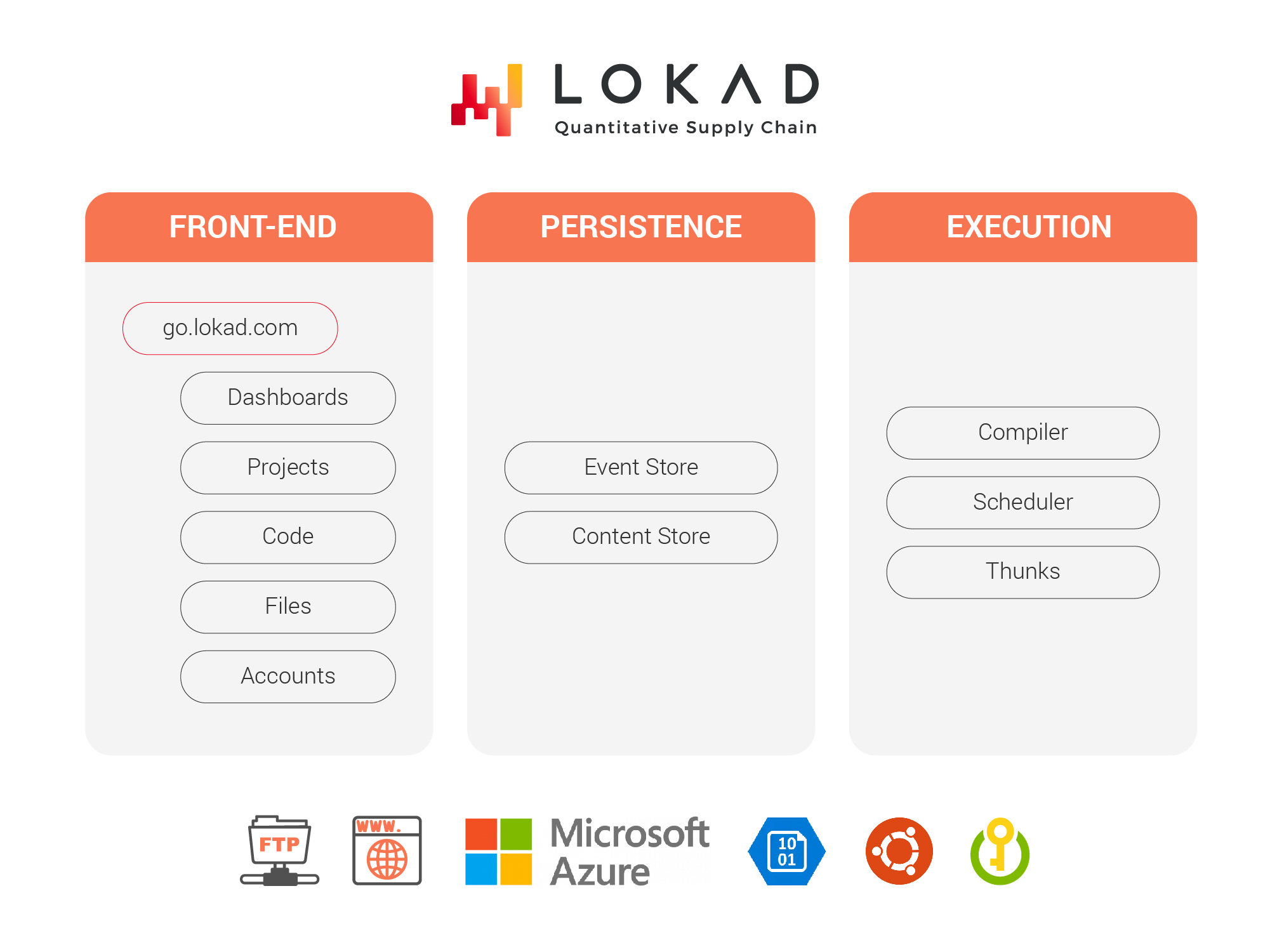

Architecture of the Lokad platform

Lokad’s platform is a multi-tenant cloud-hosted SaaS solution. This page presents the high-level architecture of the platform. Though the page is intended for an IT audience, a tech-savvy supply chain practitioner may find this information interesting, as this architecture reflects our technological vision for the predictive optimization of supply chains.

Architecture overview

Lokad presents itself as an environment to develop and operate predictive optimization apps intended for supply chain problems. At its core lies a domain-specific language (DSL) named Envision, engineered by Lokad. Envision is accessible to end-users and most capabilities are delivered through it. While Envision implies programming, Lokad is intended for supply chain specialists, not IT specialists or software engineers.

The Lokad platform is multi-tenant: the same app serves all our clients and it includes a short series of services. The granularity of the split is primarily motivated by diverging requirements in terms of reliability, security, and performance of each service – rather than by a pure functional split. Indeed, the level of coupling that exists between these services is relatively high. These services share the same Git code repository, and they are frequently upgraded together as well.

Our codebase is implemented in F#, C# and TypeScript. Apart from .NET, an open-source framework developed by Microsoft, we have very few third-party dependencies (approximately an order of magnitude fewer than the norm).

This architecture diverges extensively from the “usual” architectures found among enterprise apps – and for good reasons. The common enterprise app depends on heavy and extensive dependencies; each dependency typically weighing more than 1 million lines of code. Lokad, however, lacks third-party dependencies in the following areas:

- Relational database: Instead, we use an event store along with a content addressable store.

- Caching system: Instead, we colocate compute and transient storage.

- Pipeline manager: Instead, we have our own scheduler (detailed below).

- Analytics engine: Instead, our DSL named Envision provides analytics capabilities.

- Machine learning toolkit: Instead, the DSL also provides ML capabilities.

- Data visualization toolkit: Instead, we have rolled out our own, with tight DSL integration.

It should be noted that, while we extensively internalize the development of our platform, we intentionally and exclusively rely on third-party components for all our security components, like cryptographic algorithms.

The front-end

The front-end refers to the elements that are accessible to end-users, including automated agents used to move data to and from Lokad. Most of these interactions happen via a web browser through HTTPS, although Lokad also supports FTPS and SFTP protocols to move files.

go.lokad.com

This service hosts the front-end of Lokad’s webapp, a single-page application (SPA) built with React. This front-end depends on the APIs (application programming interfaces) provided by the other services.

This service exclusively serves static content - mostly JavaScript. There are no server-side moving parts, and, notably, no data persistence. This design is intentional as uptime is the biggest priority for this service; no matter which service is accessed through the web, the service go.lokad.com must be available.

Dashboards

The dashboards service, as the name suggests, takes care of rendering the web analytical dashboards provided by Lokad.

Each dashboard is the result of an executed Envision script. Dashboards are extensively pre-computed, although some interactive capabilities are offered as well. The design of Envision itself ensures that client-side interactions remain small data problems, no matter the size of the original dataset.

Client-side, all the data needed for the first rendering of the dashboard is obtained through a single HTTPS request. This single-request design serves to minimize latency overhead. Binary packing and compression minimize bandwidth consumption.

Server-side, the data associated with a given dashboard is packed by the content store. Again, packing is essential in order to keep the total number of network requests very low, normally below half a dozen – independent of dashboard complexity.

Interactive dashboards let the end-user explore datasets larger than what could practically be transferred to, and displayed in, a browser. Switching from one view to the next requires at most a single client-side request (and very few server-side ones).
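The single-request design described above can be illustrated with a minimal sketch. It assumes a simple length-prefixed layout and the hypothetical helpers `packTiles` and `unpackTiles`; the actual binary format used by Lokad is not described on this page, and compression is left out.

```typescript
// Hypothetical layout (an assumption, not Lokad's format):
// [tile count][length 0][bytes 0][length 1][bytes 1]...
// All integers are 32-bit unsigned, big-endian.
function packTiles(tiles: Uint8Array[]): Uint8Array {
  const total = 4 + tiles.reduce((n, t) => n + 4 + t.length, 0);
  const out = new Uint8Array(total);
  const view = new DataView(out.buffer);
  view.setUint32(0, tiles.length);
  let offset = 4;
  tiles.forEach((t) => {
    view.setUint32(offset, t.length); // length prefix
    out.set(t, offset + 4);           // tile payload
    offset += 4 + t.length;
  });
  return out;
}

// Reverse operation, as the browser would do after its single request.
function unpackTiles(packed: Uint8Array): Uint8Array[] {
  const view = new DataView(packed.buffer, packed.byteOffset, packed.byteLength);
  const count = view.getUint32(0);
  const tiles: Uint8Array[] = [];
  let offset = 4;
  for (let i = 0; i < count; i++) {
    const len = view.getUint32(offset);
    tiles.push(packed.slice(offset + 4, offset + 4 + len));
    offset += 4 + len;
  }
  return tiles;
}

// Three tile payloads travel as one buffer, hence one request.
const tilePayloads = [
  new Uint8Array([1, 2]),
  new Uint8Array([]),
  new Uint8Array([7, 8, 9]),
];
const packedDashboard = packTiles(tilePayloads);
const unpackedTiles = unpackTiles(packedDashboard);
```

Whatever the real format is, the point is the same: the number of requests stays constant while the number of tiles grows.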

Lokad vs mainstream

The constant rendering time design of Lokad differs from mainstream business intelligence (BI) and other analytical tools. The dashboards one finds in such tools are invariably made of a list of tiles, sometimes called blocks or widgets. The standard way to deal with these tiles involves one client-side request per tile, followed by an unspecified and unbounded number of server-side requests. Unfortunately, this design leads to sluggish dashboards with a noticeable delay for each tile. Further, the complete display of all the dashboard’s tiles frequently takes several seconds.

Lokad eliminates this problem by having the Envision compiler produce a packing strategy of the data used to render the dashboard, thus ensuring both single-digit requests on the client side, and even fewer on the server side. From our perspective, the dashboarding performance starts at compile-time, with scripts backing the dashboards.

Moreover, most analytical tools postpone a large portion of the calculation until a dashboard (sometimes called a report) is requested. Unfortunately, this design invariably leads to performance issues when the number of concurrent end-users increases, as the end-users compete for the same shared server-side computing resources.

Lokad extensively mitigates this problem by pre-computing dashboards. Our design minimizes the server-side resources needed – upon end-user demand – to serve a dashboard, leaving the content store as the primary (but intended) bottleneck to serve the dashboarding data. This design ensures that a large number of end-users can be concurrently served dashboards with minimal performance degradation.

Projects

The projects service manages scripts and batch jobs (called sequences). This service features a hierarchy of projects. End-users, who have the proper access rights, can view, edit, and run projects. A bespoke predictive optimization app implemented by Lokad typically includes a list of projects.

Event sourcing is used to persist user entries, leveraging the event store detailed below. Every single command or interaction issued to the service is logged and turned into a resulting event. The user interface presents the state obtained by collating all the events. This approach is known as the CQRS+ES pattern. CQRS stands for Command Query Responsibility Segregation, while ES stands for Event Sourcing.

The CQRS+ES pattern provides by design a complete historization of all the changes introduced in the application. In the specific case of Lokad, it provides a Git-like versioning of all changes ever applied to the Envision scripts that exist inside the account.
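The idea of obtaining the current state by collating events can be sketched as follows. This is a minimal illustration rather than Lokad's implementation: the event names (`ScriptCreated`, `ScriptEdited`, `ScriptDeleted`) and the `replay` helper are hypothetical.

```typescript
// Hypothetical events for scripts; the names are illustrative only.
type ScriptEvent =
  | { kind: "ScriptCreated"; scriptId: string; source: string }
  | { kind: "ScriptEdited"; scriptId: string; source: string }
  | { kind: "ScriptDeleted"; scriptId: string };

// The state shown to the user: latest source of each existing script.
type ProjectState = Map<string, string>;

// Apply one event and return a new state; the input state is not mutated.
function applyEvent(state: ProjectState, event: ScriptEvent): ProjectState {
  const next = new Map(state);
  switch (event.kind) {
    case "ScriptCreated":
    case "ScriptEdited":
      next.set(event.scriptId, event.source);
      break;
    case "ScriptDeleted":
      next.delete(event.scriptId);
      break;
  }
  return next;
}

// Replay the first `upTo` events to rebuild the state at that point in history.
function replay(events: ScriptEvent[], upTo: number = events.length): ProjectState {
  return events.slice(0, upTo).reduce(applyEvent, new Map<string, string>());
}

const eventLog: ScriptEvent[] = [
  { kind: "ScriptCreated", scriptId: "forecast", source: "v1 source" },
  { kind: "ScriptEdited", scriptId: "forecast", source: "v2 source" },
  { kind: "ScriptDeleted", scriptId: "forecast" },
];

const currentState = replay(eventLog);       // the script has been deleted
const stateBeforeDelete = replay(eventLog, 2); // holds "v2 source"
const originalState = replay(eventLog, 1);     // holds "v1 source"
```

Because no event is ever overwritten, any past version can be rebuilt by replaying a prefix of the log, which is what makes the Git-like history possible.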

Lokad vs mainstream

In terms of UI and UX, the projects service follows the mainstream approach. Under the hood, however, the CQRS+ES pattern is used as an alternative to the CRUD (create, read, update, delete) one used for most enterprise software. This pattern replaces the relational database with an event store (discussed below).

The CQRS+ES approach offers many benefits over the CRUD pattern. For example, it offers superior semantics, superior auditability and, in the case of Lokad, superior performance as it allows us to extensively customize the persistence strategy used to store and retrieve Envision source code. In fact, the bulk of the data to be persisted by the projects service consists of Envision source code.

Code

The code service features an IDE (integrated development environment) dedicated to the Envision language. This web-based IDE has all the features and functionality expected from a modern development environment, such as code coloring, autocompletion and a series of code actions (ex: variable renaming), made possible through static code analysis.

The bulk of the code service’s complexity lies in its language server backend that provides real-time feedback: hints for autocompletions, errors, or code actions at each keystroke. In particular, one of the key technical challenges consists of maintaining low latency for this function, as delays would be rather noticeable when considering the pace of normal keyboard interactions.

Files

Each account at Lokad has its own space to store files. The files service features a distributed versioned file system that is used to manage its files. This file system can be accessed through a web interface and SFTP and FTPS protocols. Conceptually, this file system is largely similar to a Git repository, except that it is intended for gigabyte-sized flat files.

File versioning is ensured through the CQRS+ES pattern, which complements the presentation of a sequence of “commits”, reflecting the evolution of the file system itself. The persistence of the files’ content is ensured through a content addressable store (discussed below).

By versioning both the code (Envision scripts) and the data, Lokad offers complete reproducibility of past behaviors for the supply chain apps deployed on its platform. From a supply chain perspective, this capability is important to make sure that every anomalous result generated by the supply chain app can be troubleshot.

Lokad vs mainstream

The files service is tightly integrated with the Envision language (providing correctness benefits), the Envision IDE (providing productivity benefits), and the Envision runtime (providing performance benefits). These benefits would be difficult to achieve with a general-purpose file system, a software piece that is first and foremost a companion of the kernel.

Moreover, many of the benefits of the files service are gained by doing less than a general-purpose file system. For example, the files service does not allow a file to be concurrently updated by several processes. Such features, in the specific context of supply chain apps, only expose supply chain practitioners to IT-centric issues and complexities without providing value in exchange.

Accounts

Identity and Access Management (IAM) of the accounts service is one of the most mainstream parts of Lokad. It manages the Lokad accounts, the Lokad users, the authentication (ideally, delegated authentication), and the ACL (Access Control List) that controls what users can or cannot do with a Lokad account.

This service also leverages the CQRS+ES pattern, which offers complete audit logs of all IAM operations - operations that are always security-sensitive due to the very nature of IAM. Using the event source as the audit log also removes entire classes of security problems, such as the audit log becoming compromised by not having selected events recorded in it.

The persistence layer

The persistence layer, as the name suggests, ensures the persistence of all the data managed by Lokad. These data come in two distinct flavors: first, the files - either uploaded by clients to Lokad or generated through Envision scripts; second, the events resulting from user operations (including automated agents, such as a file-upload script). Lokad persists both data flavors through Azure Blob Storage acting as a key-value store.

Event Store

The event store persists the events generated by all the Lokad platform services. This store represents the state-transition database of Lokad. We have released the source code of our event store as open source.

This component is simple: it only persists and serves events, in a reliable manner, leveraging an underlying distributed key-value store (Azure Blob Storage). The events are expected to be small: capped at 512 kB, but typically less than 1 kB.

There are no “smart” features, such as streaming analytics or projections management. Again, by doing less, Lokad removes entire classes of issues, such as SQL injection attacks, that arise due to the capabilities of the persistence layer.
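To make the "persist and serve, nothing else" contract concrete, here is a minimal in-memory sketch. It is not the API of Lokad's open-source event store: the class `InMemoryEventStore` and its methods are hypothetical, and an array stands in for the underlying key-value store. The 512 kB cap comes from the text; treating 1 kB as 1024 bytes is an assumption.

```typescript
// Cap from the text (512 kB). Assumption: 1 kB = 1024 bytes.
const MAX_EVENT_BYTES = 512 * 1024;

// Hypothetical append-only store; an array replaces the real key-value backend.
class InMemoryEventStore {
  private readonly events: Uint8Array[] = [];

  // Append an opaque event and return its 0-based sequence number.
  append(payload: Uint8Array): number {
    if (payload.length > MAX_EVENT_BYTES) {
      throw new Error(`event too large: ${payload.length} bytes`);
    }
    this.events.push(payload.slice()); // copy, so stored events never change
    return this.events.length - 1;
  }

  // Serve every event from a given sequence number onward.
  readFrom(seq: number): Uint8Array[] {
    return this.events.slice(seq).map((e) => e.slice());
  }
}

const eventStore = new InMemoryEventStore();
const firstSeq = eventStore.append(new Uint8Array([1]));
const secondSeq = eventStore.append(new Uint8Array([2, 3]));
const tail = eventStore.readFrom(1); // only the second event
```

The store never interprets payloads and offers no query language, so there is nothing comparable to a SQL statement for an attacker to inject into.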

Content Addressable Store

The content addressable store (CAS) persists the files, either uploaded to the Lokad platform or generated through the Envision scripts. We have released the source of our content addressable storage as open source.

The CAS is intended to support large files, typically of several MB, with an upper limit of 100GB. The CAS is used to store files, or file fragments, sharded following a columnar storage strategy. The columnar storage is aligned with the access pattern of the execution layer.
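The principle of content addressing (the key of a blob is derived from its bytes) can be sketched as below. This is an illustration only: the `ContentStore` class is hypothetical, and a toy 32-bit FNV-1a hash stands in for the cryptographic hash a real store would need. Sharding and columnar layout are not covered.

```typescript
// Toy 32-bit FNV-1a hash, used here only as a stand-in.
// Assumption: a production CAS would use a cryptographic hash instead.
function fnv1aHex(bytes: Uint8Array): string {
  let h = 0x811c9dc5;
  bytes.forEach((b) => {
    h ^= b;
    h = Math.imul(h, 0x01000193) >>> 0;
  });
  return ("00000000" + h.toString(16)).slice(-8);
}

// Hypothetical in-memory content addressable store.
class ContentStore {
  private readonly blobs = new Map<string, Uint8Array>();

  // Store a blob under the hash of its content and return that key.
  // Storing identical content twice keeps a single copy.
  put(content: Uint8Array): string {
    const key = fnv1aHex(content);
    if (!this.blobs.has(key)) {
      this.blobs.set(key, content.slice());
    }
    return key;
  }

  get(key: string): Uint8Array | undefined {
    return this.blobs.get(key);
  }

  count(): number {
    return this.blobs.size;
  }
}

const cas = new ContentStore();
const keyA = cas.put(new Uint8Array([1, 2, 3]));
const keyAgain = cas.put(new Uint8Array([1, 2, 3])); // same bytes, same key
const keyB = cas.put(new Uint8Array([1, 2, 4]));     // different bytes
```

Since a key never maps to different content, stored blobs are immutable, which fits well with versioned files: an unchanged file or fragment is simply referenced again by its key.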

The execution layer

As per its name, the execution layer ensures the execution of Envision scripts within the Lokad platform, and it includes a series of components. For the sake of brevity, we will list here only the most notable ones. The compiler transforms Envision scripts into instructions intended for a distributed virtual machine. The scheduler is a utility service to trigger, schedule and sequence Envision scripts. Thunks is the codename for the Envision virtual machine. Finally, Ionic is the name for the columnar storage strategy, produced and consumed by the Envision virtual machine.

Compiler

The compiler transforms Envision scripts into bytecode intended for the distributed virtual machine of Lokad’s platform – named “Thunks” (see below). The architecture of this compiler follows mainstream design practices: a compilation pipeline that starts with parsing followed by a series of transformations from one internal representation to the next, ending with the production of bytecode.
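The pipeline shape described above (parsing, then transformations between internal representations, then bytecode) can be shown on a toy example. The language below is not Envision, and the instructions are not those of Thunks: it is a made-up language of integer sums, with hypothetical stages `parse`, `fold` and `emit`.

```typescript
// Toy language: sums of non-negative integers such as "1 + 2 + 3".
type Ast =
  | { kind: "num"; value: number }
  | { kind: "add"; left: Ast; right: Ast };
type Instr = { op: "push"; value: number } | { op: "add" };

// Stage 1: parsing, from source text to a left-nested syntax tree.
function parse(source: string): Ast {
  const terms = source.split("+").map((t) => t.trim());
  if (terms.some((t) => !/^\d+$/.test(t))) {
    throw new Error("syntax error");
  }
  return terms
    .map((t): Ast => ({ kind: "num", value: Number(t) }))
    .reduce((left, right): Ast => ({ kind: "add", left, right }));
}

// Stage 2: a tree-to-tree transformation (constant folding).
function fold(ast: Ast): Ast {
  if (ast.kind === "num") return ast;
  const left = fold(ast.left);
  const right = fold(ast.right);
  if (left.kind === "num" && right.kind === "num") {
    return { kind: "num", value: left.value + right.value };
  }
  return { kind: "add", left, right };
}

// Stage 3: bytecode production for a hypothetical stack machine.
function emit(ast: Ast): Instr[] {
  if (ast.kind === "num") {
    return [{ op: "push", value: ast.value }];
  }
  const add: Instr = { op: "add" };
  return emit(ast.left).concat(emit(ast.right), [add]);
}

// The pipeline chains the stages; `optimize` toggles the middle one.
function compile(source: string, optimize: boolean = true): Instr[] {
  const ast = parse(source);
  return emit(optimize ? fold(ast) : ast);
}

const unoptimized = compile("1 + 2", false); // push 1, push 2, add
const optimized = compile("1 + 2 + 3");      // push 6
```

Each stage consumes one representation and produces the next, so stages can be tested, replaced or reordered independently.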

The language server backend, which guides the interactions with the Envision source code (see the Code service, above), is also part of the compiler. The language server is stateful in order to provide meaningful feedback and error messages to the Envision programmer, as coding is in progress. After most keystrokes, the script is not a valid Envision script (yet), but thanks to the language server’s state, meaningful feedback is nevertheless provided.

Scheduler

The scheduler is a utility service for executing Envision scripts without manual interventions. The scheduler triggers, schedules and sequences the execution of Envision scripts. It also provides alerting capabilities if the executions deviate from their expected timelines. The tight integration of the scheduler with the rest of the platform provides a series of desirable features, such as file-change triggers (to start Envision scripts upon receipt of files, for example), or the early detection of pending failure (if one of the Envision scripts within a sequence does not compile).
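The early detection of pending failures can be sketched as a pre-flight check: every script of a sequence is compiled before any of them runs. The names `Step`, `runSequence` and the `compiles` callback are hypothetical and only illustrate the principle; they do not reflect the scheduler's actual interface.

```typescript
type Step = { name: string; source: string };

// Hypothetical compile check: returns true when the source compiles.
type CompileCheck = (source: string) => boolean;

type SequenceResult = { ok: boolean; failed: string[] };

// Compile every step first; run the sequence only if all steps compile.
function runSequence(
  steps: Step[],
  compiles: CompileCheck,
  run: (step: Step) => void
): SequenceResult {
  const failed = steps.filter((s) => !compiles(s.source)).map((s) => s.name);
  if (failed.length > 0) {
    return { ok: false, failed }; // nothing has been executed yet
  }
  steps.forEach((s) => run(s));
  return { ok: true, failed: [] };
}

// The second step is broken, so the first one is never started.
const executedSteps: string[] = [];
const sequenceResult = runSequence(
  [
    { name: "load", source: "ok" },
    { name: "report", source: "broken" },
  ],
  (source) => source !== "broken",
  (step) => {
    executedSteps.push(step.name);
  }
);
```

Failing before the first script starts avoids discovering, hours into a nightly batch, that a later script could never have run.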

Thunks

Thunks is the codename of Lokad’s distributed virtual machine. Envision scripts natively benefit from distributed execution: the Envision compiler does not target a single machine but a cluster of them. This feature is critical for processing large datasets in a timely fashion. The Envision language was specially designed for automatic parallelization (a feature that is otherwise very difficult to achieve with a general programming language). Incidentally, the cluster also provides a higher reliability of script execution if one machine becomes unresponsive.

The cluster of machines dedicated to Thunks is pooled, in a multi-tenant fashion, across all the accounts. This feature is essential to keeping the computing costs under control. The typical supply chain use case involves one execution batch per day, typically lasting less than 60 minutes. Pooling the resources through multi-tenancy largely mitigates the overhead associated with computing resources - a cost that would otherwise be charged in 24-hour periods, while only an hour (possibly less) would be utilized.
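The economics of pooling can be illustrated with back-of-the-envelope arithmetic. The sketch below rests on idealized assumptions that are not stated in the text: each batch occupies exactly one machine, batches can be spread evenly over the day, and machines are billed in whole 24-hour periods. The function names and the figure of 48 accounts are hypothetical.

```typescript
const HOURS_PER_DAY = 24;

// One dedicated machine per account, billed for the whole day.
function dedicatedMachineHours(accounts: number): number {
  return accounts * HOURS_PER_DAY;
}

// Shared pool sized for the total daily workload.
// Idealization: batches are spread perfectly across the day.
function pooledMachineHours(accounts: number, batchHours: number): number {
  const machines = Math.ceil((accounts * batchHours) / HOURS_PER_DAY);
  return machines * HOURS_PER_DAY;
}

// Hypothetical example: 48 accounts, one 1-hour batch each per day.
const exampleAccounts = 48;
const usefulHours = exampleAccounts * 1;                     // 48
const dedicated = dedicatedMachineHours(exampleAccounts);    // 1152
const pooled = pooledMachineHours(exampleAccounts, 1);       // 48
const dedicatedUtilization = usefulHours / dedicated;        // 1/24, about 4%
```

In practice, batches tend to cluster around the same hours and large ones span several machines, so the real gain is smaller than this ideal factor of 24, but the direction of the effect is the same.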

For an extensive explanation, we suggest Victor Nicollet’s 4-part series on the design of the Envision Virtual Machine, and for answers to the most common performance-related Lokad questions, we recommend section 8 of our Security FAQ.