Fallacies in data cleaning for (short-term) sales forecasts

When it comes to data analysis, experts frequently emphasize (and rightly so) the importance of having a clean dataset before starting any analysis. Otherwise, you end up with Garbage In, Garbage Out.

As a result, most forecasting toolkits provide extensive features to support data cleaning and data preparation; and yet, Lokad does not provide any explicit feature supporting data cleaning.

Have we missed something BIG here?

We don’t believe so. There are some misunderstandings when it comes to data cleaning for the purpose of (short-term) sales forecasting. Nowadays, the sales of most retailers, wholesalers and manufacturers are stored in either an ERP or some accounting system. In our experience, as of 2010, transactional data associated with sales are remarkably clean. If a transaction recorded on November 1st, 2010 indicates that product X has been sold in quantity Y, then the probability that this information is true is very high, above 99.9% for most sales processes.

Indeed, companies cannot afford not to know what they are selling. As a result, massive efforts have been invested over the last two decades to make sure that sales data are reliable. We are not saying that erroneous sales entries never enter the system; we are only saying that their proportion is typically insignificant.

If sales data are clean, why do companies still put so much effort into data cleaning?

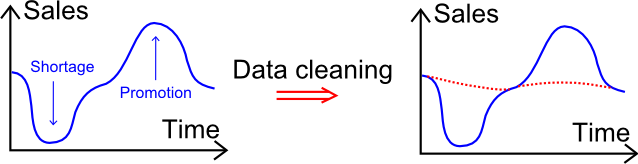

We have been observing many data cleaning practices in the industry, and it turns out that the operations referred to as cleaning tend to go much further than looking for the 0.1% of erroneous transactions. The illustration above gives some insight into the operations actually involved in a typical data cleaning phase: it’s all about smoothing the extremes. For example, partial sales during shortages are manually increased, and promotional or exceptional sales are capped.
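To make these two adjustments concrete, here is a minimal Python sketch of what a "smooth the extremes" cleaning step typically does to a daily sales series. The function name `smooth_extremes`, the median-based cap and the `cap_factor` default are hypothetical choices made for illustration; they are not taken from any particular toolkit, and this is the practice being criticized here, not a Lokad feature.

```python
from statistics import median


def smooth_extremes(sales, shortage_days, cap_factor=2.0):
    """Hypothetical illustration of the 'smooth the extremes' practice.

    sales: list of daily unit sales (as recorded in the ERP).
    shortage_days: set of day indices where stock ran out (sales were partial).
    cap_factor: assumed rule; sales above cap_factor * median are capped.
    """
    typical = median(sales)
    cap = cap_factor * typical
    cleaned = []
    for day, qty in enumerate(sales):
        if day in shortage_days:
            # Partial sales during a shortage are raised to the "typical" level.
            cleaned.append(max(qty, typical))
        else:
            # Promotional or exceptional spikes are capped.
            cleaned.append(min(qty, cap))
    return cleaned


sales = [10, 12, 11, 40, 9, 3, 11]  # hypothetical data: promo on day 3, shortage on day 5
print(smooth_extremes(sales, shortage_days={5}))  # [10, 12, 11, 22.0, 9, 11, 11]
```

In the output, the values 22.0 (day 3) and 11 (day 5) were never observed: they are estimates substituted for the recorded sales of 40 and 3.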

Needless to say, we do not believe in this approach. Real sales data should not be replaced by fictitious sales data. Nothing can tell with 100% confidence how many products would have been sold if there had not been any shortage. The partial sales are the only tangible data we have that do not already rely on statistical extrapolation.

Yet, there is one interesting side effect of the smooth-the-extremes practice: smoothing improves the accuracy of naive forecasting methods that behave much like a moving average.
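The side effect is easy to see with a plain moving average, which has no notion of promotions or shortages: a single spike in the window inflates the next forecast. The sketch below assumes a 3-period window and hypothetical numbers; `moving_average_forecast` is an illustrative helper, not a library function.

```python
def moving_average_forecast(sales, window=3):
    """Forecast the next period as the mean of the last `window` observations."""
    if len(sales) < window:
        raise ValueError("need at least `window` observations")
    recent = sales[-window:]
    return sum(recent) / window


raw = [10, 12, 11, 9, 40, 11]     # hypothetical series with a promotional spike on day 4
capped = [10, 12, 11, 9, 22, 11]  # same series after the spike has been capped

print(moving_average_forecast(raw))     # 20.0
print(moving_average_forecast(capped))  # 14.0
```

With baseline demand around 10 to 11 units, the capped series yields the "better" forecast. Capping does not make the data more accurate, though; it only compensates for a model that cannot tell a promotion from ordinary demand.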

“It is tempting, if the only tool you have is a hammer, to treat everything as if it were a nail.” (Abraham Maslow, 1966)

Adjusting the sales data to better fit the only forecasting model on hand is simply a bad case of the law of the instrument. Our approach consists of tackling the complex patterns directly instead of trying to circumvent them.